Category: Features

-

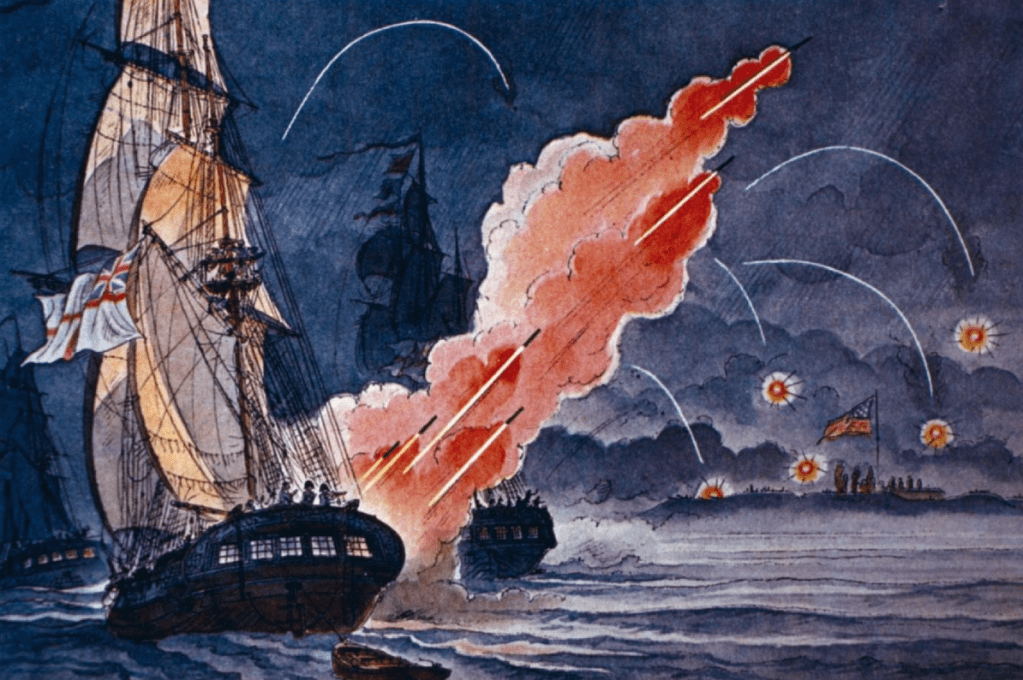

The Fort Which Birthed a Nation – The Defence of Fort McHenry, and the Penning of the U.S. National Anthem

Sam Mackenzie details the origins of the American national anthem.

-

The Wickedest Man in the World: A Brief Biography of Aleister Crowley’s Immortality

Manahil Masood explores why Aleister Crowley is still remembered and talked about today.

-

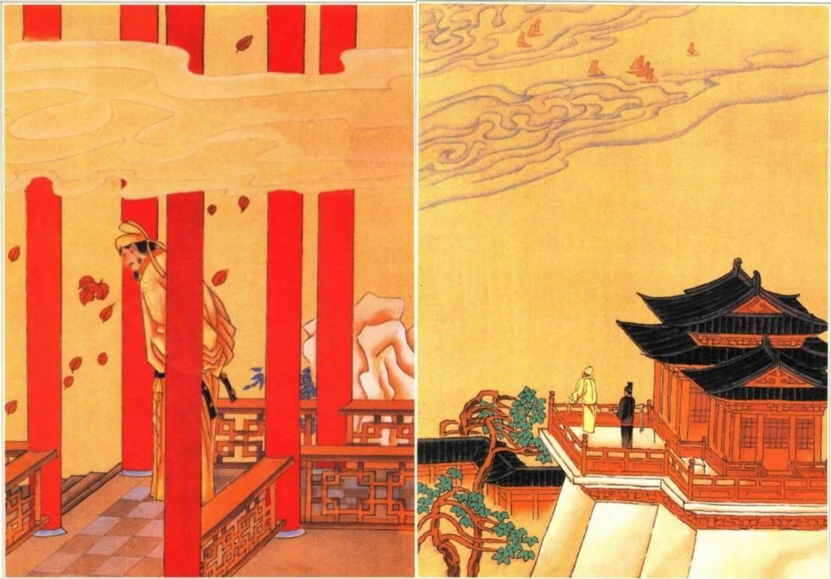

The Emperor and His Moonlit Persona: The Evocative Presence of the Moon in Li Yü’s Ci Poetry

The poem “A Joyful Rendezvous” by Li Yü reflects his emotional turmoil during captivity. Peiqi An analyses its lunar imagery and how it symbolises lost grandeur, personal loss, and the ephemeral nature of his former kingdom.

-

The Second Sino-Japanese War: Why World War Two Began in 1937

Owen James argues that both China and Japan were crucial players at the outset of World War Two, challenging the Eurocentric narrative of the conflict’s beginnings.

-

A History of Skateboarding

Elizabeth Hall explores the emergence of skateboarding, including how it gained mainstream popularity and a notable cultural identity.

-

A Cabinet of Rivals – How Theresa May’s plan to emulate Lincoln tore her government apart

Sam Mackenzie discusses how Theresa May sought to emulate Abraham Lincoln’s political strategies, and where she fell short in this regard.

-

The First Populist Movement in the UK? Ulster Unionism and the Home Rule Crisis, 1910-1914

Darcie Rogers explores Ulster Unionism as Britain’s first populist movement, which emerged during the Home Rule crisis of the early 1910s. The article highlights the movement’s grassroots participation, militaristic tendencies, and religious undertones, emphasising its opposition to perceived corruption by the Liberal government and Irish nationalists and its role in shaping Northern Ireland’s political landscape.

-

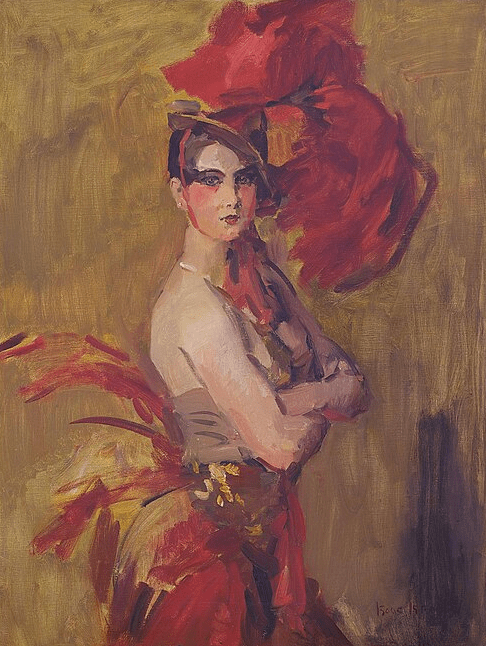

The Evolution of the Showgirl: The History Behind the Las Vegas Stage’s Iconic Women

Millie Oliver details the origins of the showgirls who are so symbolic of Las Vegas, and how modern representations of showgirls demonstrate their cultural evolution.